Here’s a very simple Bash script that scans the files in every folder under /var/www for viruses. I use it mainly to check files uploaded to the websites hosted there.
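The original script isn't reproduced here. As a rough illustration only, the sketch below assumes ClamAV's `clamscan` is installed and relies on its documented exit codes (0 = no virus found, 1 = virus found, 2 = error). The `WEB_ROOT`, `LOG_FILE` and `SCANNER` variables and the `scan_web_root` function are hypothetical names, not the author's script.

```bash
#!/usr/bin/env bash
# Minimal sketch of a /var/www virus scan with ClamAV's clamscan.
# Assumptions: ClamAV is installed; the defaults below are illustrative.

WEB_ROOT="${WEB_ROOT:-/var/www}"
LOG_FILE="${LOG_FILE:-/var/log/clamav/www-scan.log}"
# Command used for scanning (hypothetical override, e.g. for testing).
SCANNER="${SCANNER:-clamscan}"

# scan_web_root: recursively scan every folder under WEB_ROOT, print only
# infected files, and report the result from clamscan's exit code.
scan_web_root() {
  local status=0
  "$SCANNER" --recursive --infected --log="$LOG_FILE" "$WEB_ROOT" || status=$?
  case "$status" in
    0) echo "clean" ;;
    1) echo "infected files found, see $LOG_FILE" ;;
    *) echo "scan error (exit $status)" >&2 ;;
  esac
  return "$status"
}

# Example (e.g. from a nightly cron job):
#   scan_web_root
```

Running it from cron means newly uploaded files get checked regularly; `--infected` keeps the output limited to infected files. The `SCANNER` override only exists so the function can be exercised without ClamAV.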

Category: Open Source

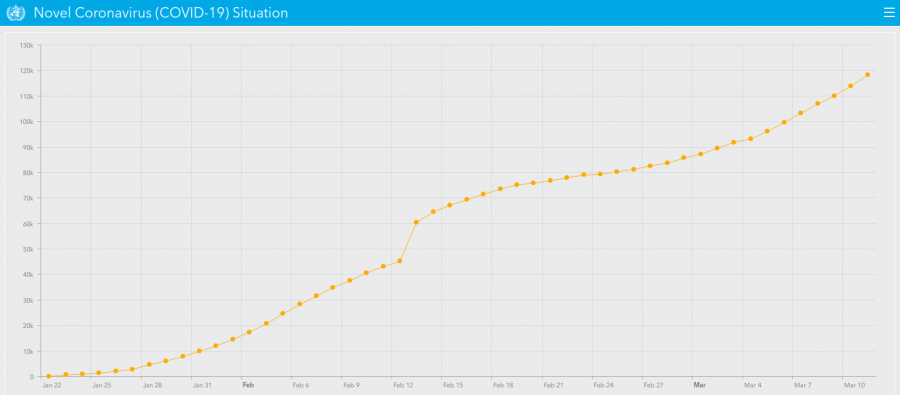

In an interview with The New York Times, mathematician Adam Kucharski explained how a contagious disease such as Coronavirus Disease 2019 (COVID-19) spreads, and which of the figures that keep popping up in coronavirus news deserve our attention.

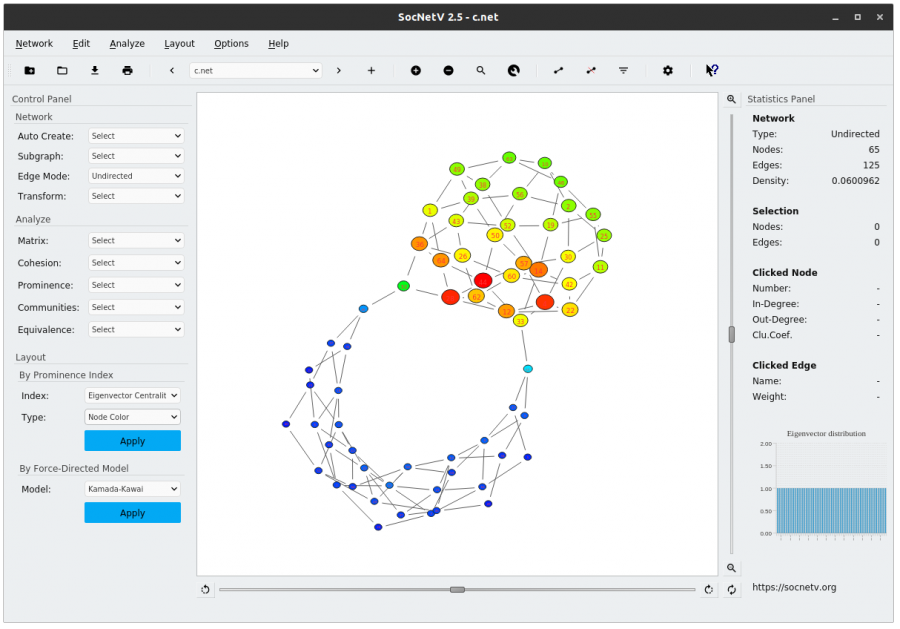

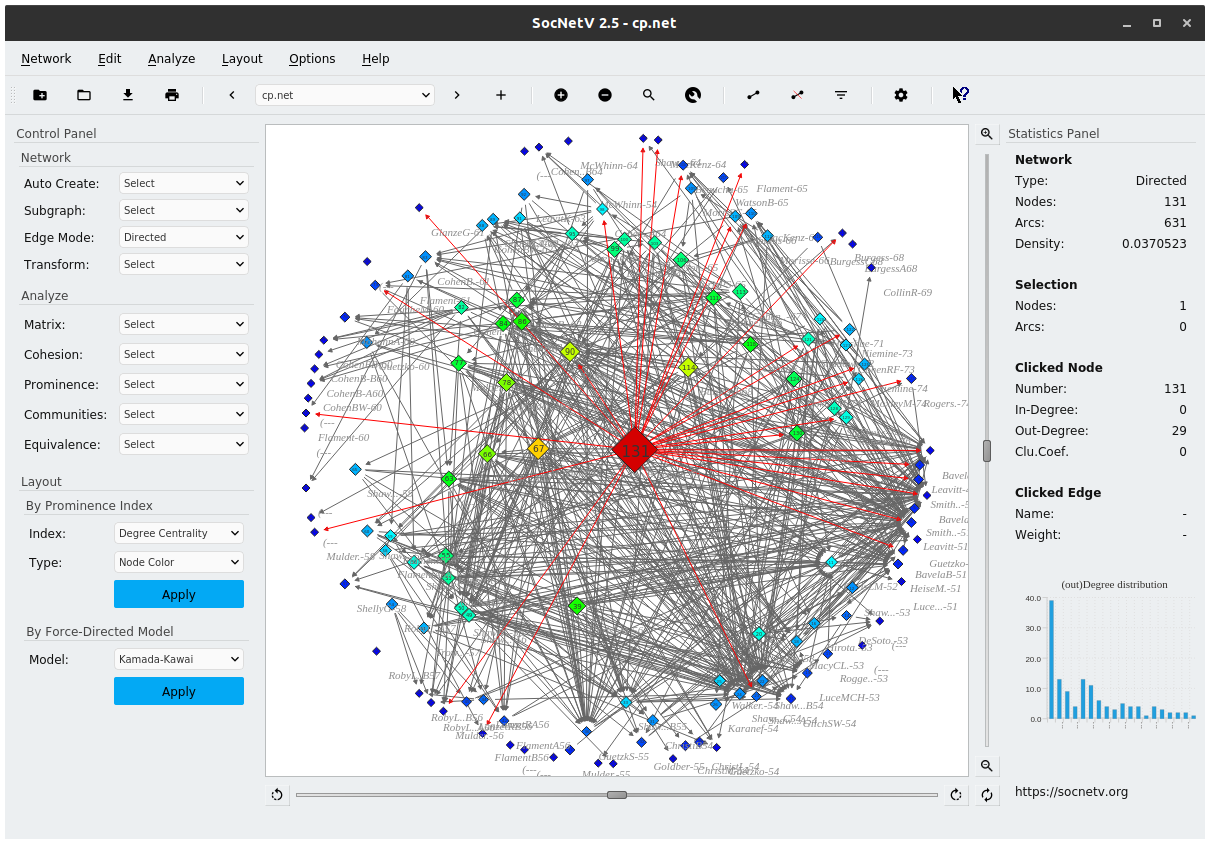

The Social Network Visualizer project has today released a brand new version of our favorite social network analysis and visualization tool. SocNetV version 2.5, codenamed “maniac”, brings new features and improvements (e.g. custom node icons) and, as usual, is available for Windows, macOS and Linux. Go to the project’s Downloads page and get it!

This article is a quick how-to on creating a Bootstrap subtheme for Drupal 7, as described here and here, so that you can work with the Sass CSS preprocessor. If you don’t know what Sass is or does, read this introductory guide.
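The starterkit-copy step of such a how-to can be sketched in Bash. This is a minimal sketch under assumptions, not the procedure from the linked guides: it assumes the Bootstrap base theme sits in `sites/all/themes/bootstrap` and provides a `starterkits/sass` folder containing a `sass.starterkit` file, which may differ between Bootstrap releases. `make_subtheme` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Minimal sketch: create a Drupal 7 Bootstrap subtheme from a starterkit.
# Assumed (hypothetical) layout: sites/all/themes/bootstrap/starterkits/sass
# containing sass.starterkit; check this against your Bootstrap version.

# make_subtheme DRUPAL_ROOT NAME: copy the starterkit to
# sites/all/themes/NAME and rename the .starterkit file to NAME.info
# so that Drupal can detect the new theme.
make_subtheme() {
  local root="$1" name="$2"
  local themes="$root/sites/all/themes"
  local kit="$themes/bootstrap/starterkits/sass"
  local dest="$themes/$name"

  if [[ ! -d "$kit" ]]; then
    echo "starterkit not found: $kit" >&2
    return 1
  fi
  if [[ -e "$dest" ]]; then
    echo "refusing to overwrite existing $dest" >&2
    return 1
  fi

  cp -R "$kit" "$dest" || return 1
  mv "$dest/sass.starterkit" "$dest/$name.info"
}

# Example:
#   make_subtheme /var/www/drupal mytheme
```

After that you would still edit `NAME.info` (at least its `name` line), add whatever Bootstrap Sass sources the starterkit expects, and compile the stylesheet with the Sass command-line tool, e.g. `sass scss/style.scss css/style.css`; those scss/css paths are assumptions.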

The Social Network Visualizer project has released a brand new version of our favorite social network analysis and visualization application. SocNetV version 2.4, released on Feb 28, is a major upgrade with lots of new features, such as the Kamada-Kawai force-directed placement (FDP) layout. As usual, the new version is available for Windows, Mac OS X and Linux. Linux users may download a very light AppImage: just click it to run the app!
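If clicking the AppImage does nothing, the file most likely lacks execute permission; the AppImage documentation has you mark the file executable before running it. A minimal Bash sketch of doing both from a terminal, with `run_appimage` as a hypothetical helper name:

```bash
#!/usr/bin/env bash
# Minimal sketch: mark a downloaded AppImage executable, then start it.
# Nothing here is SocNetV-specific; pass whatever file you downloaded.

# run_appimage FILE [ARGS...]: add the execute bit for the current user
# and run FILE with any extra arguments.
run_appimage() {
  local file="$1"
  shift
  if [[ ! -f "$file" ]]; then
    echo "no such file: $file" >&2
    return 1
  fi
  # A bare file name would be looked up in PATH, so make it a path.
  [[ "$file" == */* ]] || file="./$file"
  chmod u+x "$file" || return 1
  "$file" "$@"
}
```

For example, `run_appimage ~/Downloads/SocNetV.AppImage`, where the file name is illustrative; use the name the downloaded file actually has.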

See a brief discussion of the new features and changes in version 2.4 at:

http://socnetv.org/news/?post=socnetv-24-released

Note: This article is old and its information is obsolete. Please use Let’s Encrypt to get free SSL/TLS certificates.

This how-to describes how to obtain free, signed SSL certificates from StartSSL.com and configure Apache on Debian to use them for your virtual hosts, so that people can access your site(s) over HTTPS (e.g. https://example.com) without the browser ever showing them the scary “this site isn’t trusted” warning.

Before you start, you will need:

- One or more working domains that point to a server you own and have root access to. In this tutorial I’ll use my wife’s supersyntages.gr as a testbed.

- A browser. This tutorial uses Chrome, although you might prefer Firefox (see below for why).

- Root access to your Debian server with Apache installed and the above domains configured as VirtualHosts.

- The openssl package installed on your computer.

- One of the following emails configured in each of the domains you want to include in the SSL certificates:

  - [email protected]

  - [email protected]

  - [email protected]
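Two steps this how-to relies on, creating a private key plus certificate signing request (CSR) with openssl and pointing an Apache `VirtualHost` at the issued certificate, can be sketched in Bash. This is a minimal sketch, not the article's exact procedure: the `/etc/ssl/...` paths, the 2048-bit RSA key size and the `make_csr`/`ssl_vhost` helper names are assumptions. The same key and CSR pair can be submitted to other CAs; Let’s Encrypt clients such as Certbot normally create them for you.

```bash
#!/usr/bin/env bash
# Minimal sketch: key + CSR with openssl, and an Apache SSL VirtualHost.
# Paths, key size and helper names are assumptions for illustration.

# make_csr DOMAIN OUTDIR: create OUTDIR/DOMAIN.key (unencrypted 2048-bit
# RSA key, readable only by its owner) and OUTDIR/DOMAIN.csr (CN=DOMAIN).
make_csr() {
  local domain="$1" outdir="$2"
  mkdir -p "$outdir" || return 1
  openssl req -new -newkey rsa:2048 -nodes \
    -keyout "$outdir/$domain.key" \
    -out "$outdir/$domain.csr" \
    -subj "/CN=$domain" || return 1
  chmod 600 "$outdir/$domain.key"
}

# ssl_vhost DOMAIN DOCROOT: print a port-443 VirtualHost for DOMAIN.
# The certificate/key locations are hypothetical; point them at your files.
# Apache older than 2.4.8 also needs SSLCertificateChainFile for the CA's
# intermediate certificate; from 2.4.8 on it can be appended to the
# SSLCertificateFile instead.
ssl_vhost() {
  local domain="$1" docroot="$2"
  cat <<EOF
<VirtualHost *:443>
    ServerName $domain
    DocumentRoot $docroot
    SSLEngine on
    SSLCertificateFile /etc/ssl/certs/$domain.crt
    SSLCertificateKeyFile /etc/ssl/private/$domain.key
</VirtualHost>
EOF
}

# Example:
#   make_csr example.com ~/ssl
#   ssl_vhost example.com /var/www/example.com
```

On Debian you would then save the printed block under `/etc/apache2/sites-available/`, enable it with `a2enmod ssl` and `a2ensite`, and reload Apache.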

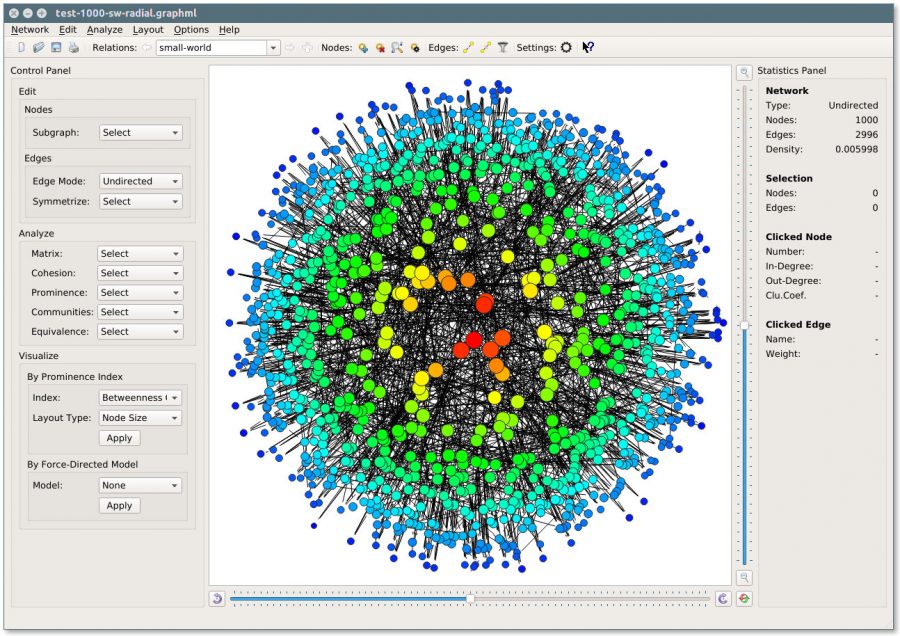

Your favorite free software project for social network analysis and visualization, Social Network Visualizer, has released a new version. SocNetV v2.1 carries the rather eloquent codename “fixer” and is available for Windows, Mac OS X and Linux from the project’s Downloads page. See some nice screenshots of SocNetV in action.