What is Varnish?

Varnish Cache, or just Varnish, is an open-source HTTP accelerator and caching reverse proxy for web servers such as Apache or nginx that host content-heavy dynamic web sites. In non-geek speak, Varnish is a free program which runs in front of your web server (which in this context is called the backend or origin) and stores (caches) a copy of each webpage served by the web server. When a user requests a cached page, Varnish steps in and serves the cached copy instead of requesting the same page again and again from the backend server. So Varnish is ideal for developers who run high-traffic sites and want their web application to be fast and highly available. This how-to is an attempt to present a comprehensive but simple introductory guide to Varnish configuration for web developers who want to scale their projects gracefully.

To your visitors, Varnish is completely transparent: it only reveals its presence through “server response headers” (which can be disabled). This is because Varnish sits in front of your web server, acting as the web server. Thus, it accepts all incoming connections/requests from the visitors’ browsers and serves the requested content and resources (e.g. images) from its cache if they exist there, without informing the user about its presence. If a page or resource is not in the cache at all or it has “expired” (see “What is TTL?” at the end of the article), then (and only then) Varnish passes the request to the origin server, again transparently.

In essence, Varnish is a state-of-the-art web accelerator. In the words of its own developers: “Its mission is to sit in front of a web server and cache content. It makes your web site go faster.” How much faster? Varnish is really fast. According to its developers, it can speed up content delivery by a factor of 300 to 1000 while serving thousands of requests per second on modest equipment. In fact, the older Varnish 2 has been measured serving 275,000 requests per second from a single 64-bit server.

Thus, Varnish:

- Accelerates the delivery of your webpages to your visitors.

- Reduces the amount of traffic to your web server and, in effect, minimizes both CPU usage and database access by the server.

- Helps you achieve the High-Availability Holy Grail at minimum cost, that is, serving thousands of pageviews simultaneously on relatively cheap hardware.

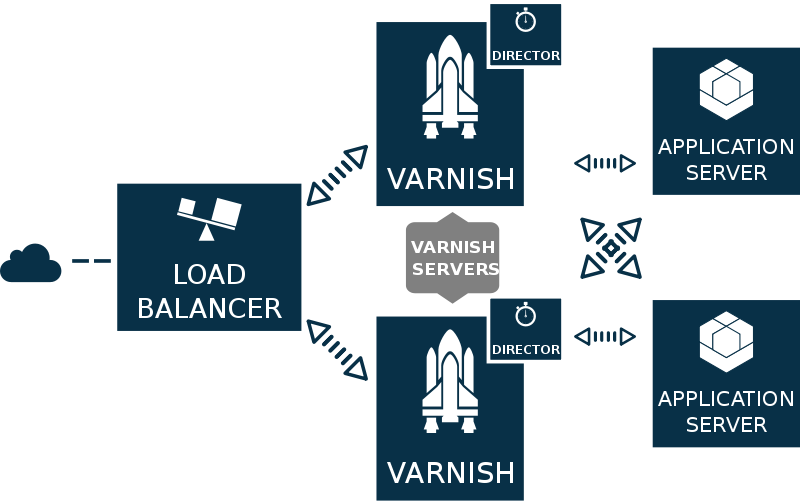

Scaling out

Scaling, in terms of web development, is the ability of a website/server to handle and serve more requests and data than it was originally built for. There are two common ways to scale a website: Vertical and Horizontal Scaling. In Vertical Scaling (or Scaling Up) the developer increases the resources of a single web server by adding gigabytes of RAM, upgrading to the fastest and most expensive CPU available and replacing the drives with much faster and much more expensive ones (e.g. SSDs) in order to handle more traffic than before. Obviously, this costs a lot. In contrast, in Horizontal Scaling (or Scaling Out) the developer adds one or more much cheaper, off-the-shelf dedicated servers to the overall system. To achieve the same results in terms of speed and scalability as in Vertical Scaling, Varnish can be run on one, two or more such servers acting as a proxy to “offload” traffic from the main web server and serve more content, much faster and without downtime.

Note: To install and take advantage of Varnish you need at least:

- One server with at least 16GB of RAM and a modern 64 bit version of Linux or *BSD. Two or more servers are highly recommended 🙂

- root access to the Varnish server(s)

Since this article is more of an introduction to Varnish than a complete High Availability how-to, I will not go in depth about setting up multiple Varnish boxes (directors etc.), but you can find useful references at the end of this text. Keep in mind that what follows has been tested on my server, a quad-core i7-3770 CPU @ 3.40GHz with 16GB of RAM, running Apache, MySQL and Varnish.

How to install Varnish

There are precompiled packages for Debian and Ubuntu which you can install. For the older Varnish 3.0, discussed here, use the following commands as root in Debian:

    curl http://repo.varnish-cache.org/debian/GPG-key.txt | apt-key add -
    echo "deb http://repo.varnish-cache.org/debian/ $(lsb_release -s -c) varnish-3.0" >> /etc/apt/sources.list.d/varnish.list
    apt-get update
    apt-get install varnish

For Ubuntu setups, replace the first two commands with these:

    curl https://repo.varnish-cache.org/ubuntu/GPG-key.txt | apt-key add -
    echo "deb https://repo.varnish-cache.org/ubuntu/ precise varnish-3.0" >> /etc/apt/sources.list.d/varnish-cache.list

Varnish 3.0 initial configuration

In Debian/Ubuntu, Varnish is configured through two simple text files:

    /etc/default/varnish
    /etc/varnish/default.vcl

The /etc/default/varnish file contains 4 configuration alternatives (commented-out):

- Alternative 1, Minimal configuration, no VCL

- Alternative 2, Configuration with VCL

- Alternative 3, Advanced configuration

- Alternative 4, Do It Yourself

In this how-to, we are using the second alternative, so go ahead and uncomment the DAEMON_OPTS variable and make it look like this:

    DAEMON_OPTS="-a :80 \
                 -T localhost:6082 \
                 -f /etc/varnish/default.vcl \
                 -S /etc/varnish/secret \
                 -p thread_pool_add_delay=2 \
                 -p thread_pools=4 \
                 -p thread_pool_min=100 \
                 -p thread_pool_max=1000 \
                 -p session_linger=100 \
                 -p sess_workspace=262144 \
                 -s malloc,5G"

In this piece of code, we tell Varnish to load and run with some specific options, explained below.

Keep in mind that Varnish uses, in the words of the documentation, “one thread for each session” and operates with multiple pools of threads. When a connection is accepted, it is delegated to one of these thread pools. The thread pool will do one of the following:

- further delegate the connection to any available thread,

- put the connection on a queue (if there are no available threads) or

- drop the connection if the queue is full.

So the total number of threads you let Varnish use “is directly proportional to how many requests Varnish can serve concurrently”. Thus the thread_pool* parameters, especially thread_pool_min and thread_pool_max, are critical. The thread_pools parameter is less important, mainly because it is only used to calculate the total number of threads. If you expect at most 1000 concurrent requests in total, tune Varnish just for that. In the above example we expect Varnish to handle 400 concurrent requests at a minimum, which is quite high. Let’s see in detail what all these options mean and do:

- -a :80

  Instructs Varnish to “listen” on port 80. This is the default port for web access.

- -f /etc/varnish/default.vcl

  It means: read the configuration from default.vcl (discussed below).

- -p thread_pool_add_delay=2

  Wait at least this long between creating new threads (default: 2ms). We need this to be neither too long nor too short, so that Varnish can create new threads fast enough to avoid exhausting the queue and dropping requests, without risking threads piling up.

- -p thread_pools=4

  Number of worker thread pools (default: 2). Increasing the number of worker pools is supposed to decrease lock contention. We set it to 4 for an 8-core CPU, but change it to suit your needs (Tip: set this to at most the number of cores of your CPU). Note that “too many pools waste CPU and RAM resources, and more than one pool for each CPU is probably detrimal to performance”. The official documentation says, and Varnish developers maintain, that it is sufficient to keep thread_pools at 2.

- -p thread_pool_min=100

  Set the minimum number of threads in each pool to 100 (the default is 5). We set this that high because idle threads are relatively harmless and enable Varnish to scale faster from low-load situations where threads have expired. Multiply thread_pool_min by the number of thread_pools, and you get the total number of threads Varnish runs on a normal day. Use the varnishstat tool to monitor how many new threads your Varnish boxes actually create (check the N queued work requests, n_wrk_queued).

- -p thread_pool_max=1000

  The maximum number of threads in each pool is set to 1000 (the default is 500). This means that we are going to have a maximum of 1000 x 4 = 4000 threads in total. Note that setting it too high will not increase performance, and if you increase the total above 5000 you risk running into various issues. Varnish developers do not recommend running with more than 5000 threads.

- -p session_linger=100

  How long each thread “waits” on the session to see if a new request appears right away. The default is 50ms. We let threads wait for new requests for up to 100ms in order to avoid context switching when we starve the CPU. Reference from the documentation: “If sessions are reused, as much as half of all reuses happen within the first 100 msec of the previous request completing.”

- -s malloc,5G

  We instruct Varnish to keep data in memory rather than in files (which is the default). As a rule of thumb you may use the following formula: (totalRAM-20%)G. If your Varnish server has 16GB of RAM, you can safely allocate 12GB for Varnish caching purposes alone.
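To double-check the thread and memory numbers above, here is a minimal shell sketch of the arithmetic. The function names total_threads and cache_size_gb are made up for this illustration and are not part of Varnish, and the 80% figure is just the rule of thumb quoted above, rounded down to whole gigabytes.

```shell
# Illustrative helpers only; these names are not part of Varnish.

# total_threads POOLS THREADS_PER_POOL
# Threads across all pools, e.g. thread_pools x thread_pool_min.
total_threads() {
    echo $(( $1 * $2 ))
}

# cache_size_gb TOTAL_RAM_GB
# Rule of thumb from the text: total RAM minus 20%, rounded down.
cache_size_gb() {
    echo $(( $1 * 80 / 100 ))
}

echo "minimum threads: $(total_threads 4 100)"         # 4 pools x 100 = 400
echo "maximum threads: $(total_threads 4 1000)"        # 4 pools x 1000 = 4000
echo "malloc size for 16GB RAM: $(cache_size_gb 16)G"  # 12G
```

If the maximum comes out above 5000, that is the point where the warning about thread_pool_max applies.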

To decide the memory size of Varnish cache, take into account three factors:

- how big your most-used data is (e.g. for a frequently updated news site this is its frontpage along with all the pages/resources linked from it)

- how expensive it is to generate an object (e.g. an image) and how much memory you have (e.g. does it make sense to cache all images?)

- the overhead of each object stored (1k according to the documentation). So if you malloc 5GB for cache, and you have lots of small objects there, Varnish may end up using 10GB…

In any case, you need to monitor how the cache is behaving using varnishstat (see “monitoring Varnish” below). Watch the n_lru_nuked counter: if it is constantly high, it means your cache size is not enough for your load and Varnish is constantly nuking objects to make space. In that case, you need to increase your cache size.
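To see where the “5GB may become 10GB” warning comes from, here is a rough back-of-the-envelope sketch. It assumes the roughly 1KB per-object overhead quoted above and a cache filled with objects of a single average size; overhead_mb is a hypothetical helper name, and real memory use will differ.

```shell
# Illustrative estimate only; the ~1KB per-object overhead is the figure
# quoted from the documentation, the function name is made up.

# overhead_mb STORAGE_MB AVG_OBJECT_KB
# Approximate bookkeeping overhead (in MB) when STORAGE_MB of cache is
# filled with objects of AVG_OBJECT_KB each.
overhead_mb() {
    objects=$(( $1 * 1024 / $2 ))   # how many objects fit in the storage
    echo $(( objects / 1024 ))      # ~1KB each, converted back to MB
}

echo "5GB of 1KB objects:   ~$(overhead_mb 5120 1) MB overhead"
echo "5GB of 100KB objects: ~$(overhead_mb 5120 100) MB overhead"
```

With 1KB objects the overhead is as large as the cache itself, while with 100KB objects it is only about 1%.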

For a fuller description of these settings, run varnishadm and type param.show -l at the Varnish management prompt.

Configuring default.vcl for Varnish 3.0

Let’s move to default.vcl. This is the file where we program the logic of our caching mechanism, instructing Varnish exactly how to behave when a request pops in, what to do with its cached resources, when to invalidate them, and so on.

Varnish is programmed using a language called Varnish Configuration Language, or VCL, and all programming is done through a number of predefined subroutines. Each of these subroutines is executed at a different time/stage and the code execution is done line by line. There are many subroutines to tinker with as well as definitions and access control lists (i.e. to define the backend, to deliver a requested object and so on) but Varnish developers point out that “99% of all the changes you’ll need to do will be done in two subroutines: vcl_recv and vcl_fetch.”

- vcl_recv is executed when Varnish receives a new request. Thus, in vcl_recv we instruct Varnish how to respond to each new request.

- vcl_fetch is executed when pages/resources are fetched from the backend.

Note that default.vcl already has a commented-out copy of its ‘default’ VCL logic, including all these subroutines. We will change, among others, vcl_fetch and vcl_recv, but if we did not add any code in them Varnish would execute their default logic (which is commented out but active by default).

If you like you can download my working default.vcl. Below, I analyze what it does and why.

Defining the backend server

At the top of default.vcl we put our default backend definition. This is where we tell Varnish where to find our web server (Apache in this case).

    backend default {
      .host = "127.0.0.1";
      .port = "8015";
      .probe = {
        .url = "/";
        .timeout = 0.3s;
        .interval = 1s;
        .window = 10;
        .threshold = 8;
      }
    }

In this example, we tell Varnish that the backend server listens at 127.0.0.1 on port 8015. Yes, this is the same server Varnish runs on. It is not optimal but it suffices for our little introductory how-to. In your code, just change the host and port to match your setup. Of course, as mentioned earlier, Varnish can have several backends defined and can even join several backends together into clusters for load balancing purposes.

Note: Obviously, you need to edit /etc/apache2/ports.conf (along with any virtual host definitions you may have) and change the NameVirtualHost and Listen directives to match the port you specify in default.vcl, e.g.:

    NameVirtualHost *:8015
    Listen 8015

Getting back to our default backend definition, the .probe struct sets up Backend Health Polling, that is, our server health polling scheme. In essence, we tell Varnish to periodically poll (make a new TCP connection to) http://127.0.0.1:8015/ to check server status. If it gets a 200 status code, the probe is considered good. The polling interval is set to 1 sec (default is 5), and the probe timeout to 0.3s (that’s how long we wait for the server to respond). If the backend server fails to answer within 0.3s, we consider the probe failed. In any case, Varnish will poll the server again after at least 1s + 0.3s.

Also, we set .window = 10 and .threshold = 8. The former defines how many of the most recent polls Varnish examines to determine backend status, while the latter sets the minimum number of polls within .window which must have succeeded in order to declare our server healthy. So, Varnish looks at the last 10 polls, made every 1.3 sec or so, covering at least 10 secs in total. If 8 out of the 10 polls are good, the backend is considered healthy. Otherwise it is declared sick and… well, in that case we will instruct Varnish to enter grace mode (see below). Once you set up your backend, you can check Varnish backend sanity for yourself.
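The window/threshold rule can be sketched in a few lines of shell. This is a simplified model of the decision described above, not Varnish’s actual implementation; probe_verdict is a hypothetical helper, and each poll result is written as 1 (good) or 0 (failed), oldest first.

```shell
# Simplified model of the .window/.threshold rule; not Varnish code.

# probe_verdict WINDOW THRESHOLD RESULT...
# Prints "healthy" if at least THRESHOLD of the last WINDOW results are 1.
probe_verdict() {
    pv_window=$1
    pv_threshold=$2
    shift 2
    # Drop the oldest results until only the last WINDOW remain.
    while [ "$#" -gt "$pv_window" ]; do
        shift
    done
    pv_good=0
    for pv_result in "$@"; do
        if [ "$pv_result" = "1" ]; then
            pv_good=$(( pv_good + 1 ))
        fi
    done
    if [ "$pv_good" -ge "$pv_threshold" ]; then
        echo healthy
    else
        echo sick
    fi
}

probe_verdict 10 8 1 1 1 1 1 1 1 1 0 0   # 8 good polls out of 10: healthy
probe_verdict 10 8 1 1 1 1 1 1 1 0 0 0   # 7 good polls out of 10: sick
```

With .window = 10 and .threshold = 8, three failed polls among the last ten are enough to mark the backend as sick.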

Defining internal network and purge/proxy hosts

We also need a couple of access control list (acl) definitions in our default.vcl. These are lists of hosts allowed to do certain things, e.g. purge content.

First, we define an ‘internal’ acl with our whole internal network subnet: 256 hosts in the 192.168.1.x range. We will allow these hosts internal access to certain files or functions which will not be accessible from the public internet.

    acl internal {
      "192.168.1.0"/24;
      "127.0.0.1";
    }

Then, we define a ‘purge’ acl. These are the hosts that will be allowed to purge/ban content from Varnish cache.

    acl purge {
      "localhost";
      "127.0.0.1";
    }

Finally, we might want to define another access control list (e.g. upstream_proxy) with the upstream proxies we trust to set X-Forwarded-For correctly. This is only needed if you have a proxy in front of Varnish, e.g. nginx.

    acl upstream_proxy {
      "127.0.0.1";
    }

Note: “X-Forwarded-For” is a header which we want to be populated with the original IP of our visitors, so that our backend CMS (Drupal or WordPress) can see the original IP of each request. Varnish does exactly that in the default vcl_recv routines, which will be executed after those we define ourselves below.
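If the "/24" notation in the ‘internal’ acl is unfamiliar, the following shell sketch shows what such a match boils down to: compare the first 24 bits of the client address with those of the network address. The helpers ip_to_int and acl_match are made up for this illustration; Varnish does this matching internally and none of this code is needed on the server.

```shell
# Illustration of CIDR matching; these helpers are not part of Varnish.

# ip_to_int A.B.C.D
# Converts a dotted IPv4 address to a single integer. The body runs in a
# subshell so that the IFS change does not leak out.
ip_to_int() (
    IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# acl_match CLIENT_IP NETWORK PREFIX_LENGTH
# Prints "match" if CLIENT_IP falls inside NETWORK/PREFIX_LENGTH.
acl_match() {
    am_ip=$(ip_to_int "$1")
    am_net=$(ip_to_int "$2")
    am_mask=$(( (0xFFFFFFFF << (32 - $3)) & 0xFFFFFFFF ))
    if [ $(( am_ip & am_mask )) -eq $(( am_net & am_mask )) ]; then
        echo match
    else
        echo "no match"
    fi
}

acl_match 192.168.1.77 192.168.1.0 24   # inside the internal subnet
acl_match 192.168.2.1 192.168.1.0 24    # outside it
```

A /24 leaves the last 8 bits free, which is where the 256 hosts in the 192.168.1.x range come from.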

vcl_recv – Responding to incoming requests

Now let’s move on to vcl_recv, the first subroutine executed by Varnish, right after it has accepted and decoded a new request. In it we will instruct Varnish to check some conditions (for instance, the requested url req.url or the existence of some cookie) to decide what to do with the request (req). In any case, there are 4 different terminating statements or, if you prefer, options:

- pass (bypass the cache): Execute the rest of the Varnish processing as normal, but do not look up the content in the cache or store it there.

- pipe the request: Tells Varnish to shuffle bytes between the selected backend and the connected client without looking at the content. Because Varnish no longer tries to map the content to a request, any subsequent request sent over the same keep-alive connection will also be piped, and will not appear in any log.

- lookup the request in cache, possibly entering the data in cache if it is not already present.

- error – Generate a synthetic response from Varnish. Typically an error message, redirect message or response to a health check from a load balancer.

In the code below, you’ll notice that a great deal is done through the command unset req.http.Cookie. req is the Varnish alias for “the client request” and req.http.Cookie is an alias for all cookies sent with that request. By default, Varnish will not cache an object coming from the backend with a Set-Cookie header present. Also, if the client sends a Cookie header, Varnish will bypass the cache and send the request directly to the backend. But for most websites this is not optimal, since they run a lot of third-party services (e.g. analytics) which use cookies, for instance to track users or let them share content on social media. In all these cases, Varnish will not serve cached content; it will pass the request to the backend instead. To remedy this, we tell Varnish to check some condition and, if it is true, to unset the HTTP Cookie header or strip specific unnecessary cookies.

Furthermore, it makes sense sometimes to force Varnish to completely disregard cookies (i.e. when requesting static files or when the backend is unhealthy) and directly serve cached content.

In contrast, we often have some pages/paths that should never be cached, e.g. administrative panels or content editing tools. For them, we instruct Varnish to pass the request directly to the backend.

Below you can find working code along with explanatory comments.

    sub vcl_recv {

      # Set up grace mode.
      # Allow Varnish to serve up stale (kept around) content if the backend is
      # responding slowly or is down.
      # We accept serving a 6h old object (plus its TTL).
      if (!req.backend.healthy) {
        set req.grace = 6h;
      } else {
        set req.grace = 15s;
      }

      # If our backend is down, unset all cookies and serve pages from cache.
      if (!req.backend.healthy) {
        unset req.http.Cookie;
      }

      # ONLY IF YOU HAVE ANOTHER PROXY (e.g. nginx) IN FRONT OF VARNISH
      # Set the X-Forwarded-For header so the backend can see the original
      # client IP address. If one is already set by an upstream proxy, reuse that.
      # if (client.ip ~ upstream_proxy && req.http.X-Forwarded-For) {
      #   set req.http.X-Forwarded-For = req.http.X-Forwarded-For;
      # } else {
      #   set req.http.X-Forwarded-For = regsub(client.ip, ":.*", "");
      # }

      # If the request is to purge cached items, check if the visitor is authorized
      # to invoke it. Only IPs in the 'purge' acl we defined earlier are allowed to
      # purge content from cache.
      # Return error 405 if the purge request comes from a non-authorized IP.
      if (req.request == "PURGE") {
        if (!client.ip ~ purge) {
          # Return Error 405 if client IP not allowed.
          error 405 "Forbidden - Not allowed.";
        }
        return (lookup);
      }

      # In some cases, e.g. when an editor uploads a file, it makes sense to pipe
      # the request directly to Apache for streaming.
      # Also, you should pipe the requests for very large files, e.g. a downloads area.
      # This check has to come before the pass rules below: "^/admin" would
      # otherwise match the two /admin/... paths first and they would never be piped.
      if (req.url ~ "^/admin/content/backup_migrate/export" ||
          req.url ~ "^/admin/config/regional/translate/import" ||
          req.url ~ "^/batch/.*$" ||
          req.url ~ "^/dls/.*$") {
        return (pipe);
      }

      # Pass directly to backend (do not cache) requests for the following
      # paths/pages.
      # We tell Varnish not to cache Drupal edit or admin pages, as well as
      # Wordpress admin and user pages.
      # Edit/add paths that should never be cached according to your needs.
      if (req.url ~ "^/status\.php$" ||
          req.url ~ "^/update\.php$" ||
          req.url ~ "^/ooyala/ping$" ||
          req.url ~ "^/admin" ||
          req.url ~ "^/admin/.*$" ||
          req.url ~ "^/wp-admin" ||
          req.url ~ "^/wp-admin/.*$" ||
          req.url ~ "^/user" ||
          req.url ~ "^/user/.*$" ||
          req.url ~ "^/comment/reply/.*$" ||
          req.url ~ "^/login/.*$" ||
          req.url ~ "^/login" ||
          req.url ~ "^/node/.*/edit$" ||
          req.url ~ "^/node/.*/edit" ||
          req.url ~ "^/node/add/.*$" ||
          req.url ~ "^/info/.*$" ||
          req.url ~ "^/flag/.*$" ||
          req.url ~ "^.*/ajax/.*$" ||
          req.url ~ "^.*/ahah/.*$") {
        return (pass);
      }

      # Stop people playing around with our website.
      # Do not allow outside access to cron.php, install.php or server-status
      # from IPs not included in the 'internal' acl.
      # Do not allow DFind requests.
      if (((req.url ~ "^/(cron|install)\.php$" || req.url ~ "^/server-status.*$") && !client.ip ~ internal) ||
          req.url ~ "^/w00tw00t") {
        # Varnish may throw error 404 directly along with a nice message.
        error 404 "Page not found. You are out of your territory, man...";
        # Or if you like a custom Drupal error page you've defined at the path "404".
        # set req.url = "/404";
      }

      # As mentioned before, remove all cookies for static files, images etc.
      # Varnish will always cache the following file types and serve them (during TTL).
      # Note that Drupal .htaccess sets max-age=1209600 (2 weeks) for static files.
      if (req.url ~ "(?i)\.(bmp|png|gif|jpeg|jpg|doc|pdf|txt|ico|swf|css|js|html|htm)(\?[a-z0-9]+)?$") {
        # Remove the query string from static files.
        set req.url = regsub(req.url, "\?.*$", "");

        unset req.http.Cookie;

        # Remove extra headers.
        # We remove Vary and User-Agent headers that any backend app may set.
        # If we don't do this, Varnish will cache a separate copy of the resource
        # for every different user-agent.
        unset req.http.User-Agent;
        unset req.http.Vary;

        return (lookup);
      }

      # FORCE CACHING OF A DOMAIN
      # If you have misbehaving sites which set cookies that stop Varnish from
      # caching, you can force Varnish to cache them.
      # E.g. to force caching of a misbehaving Drupal 6 site, linuxinsider.gr,
      # uncomment the commands below. They check if the requested host is the
      # misbehaving one, and if there are no cookies left (SESSION or NO_CACHE for
      # Drupal, see above), then we cache the page. You may want to add a relevant
      # entry in vcl_fetch that sets a particular TTL for that domain.
      # if (req.http.host ~ "(www\.)?linuxinsider\.gr" && req.http.Cookie) {
      #   unset req.http.Cookie;
      # }

      # Handle cookies. Because Varnish will pass (not cache) any request with
      # cookies, we want to remove all unnecessary cookies. But instead of building
      # a "blacklist" of cookies to strip (a list that obviously needs maintenance)
      # we build a "whitelist" of cookies which will be kept.
      # In the case of Drupal 7, these cookies are NO_CACHE and SESS.
      # All other cookies will be automatically stripped from the request.
      # Drupal always sets a NO_CACHE cookie after any POST request,
      # so if we see this cookie we disable the Varnish cache temporarily,
      # so that the user sees fresh content.
      # Also, the Drupal session cookie allows all authenticated users
      # to pass through as long as they're logged in.
      # Comments inside the if statement explain what the commands do.
      if (req.http.Cookie && !(req.url ~ "wp-(login|admin)")) {
        # 1. Prepend a semi-colon to the cookie string.
        set req.http.Cookie = ";" + req.http.Cookie;

        # 2. Remove all spaces that appear after semi-colons.
        set req.http.Cookie = regsuball(req.http.Cookie, "; +", ";");

        # 3. Match the cookies we want to keep, adding back the space we removed
        #    previously. (\1) is the first matching group in the regsuball.
        set req.http.Cookie = regsuball(req.http.Cookie, ";(SESS[a-z0-9]+|NO_CACHE)=", "; \1=");

        # 4. Remove all other cookies, identifying them by the fact that they have
        #    no space after the preceding semi-colon.
        set req.http.Cookie = regsuball(req.http.Cookie, ";[^ ][^;]*", "");

        # 5. Remove all spaces and semi-colons from the beginning and end of the
        #    cookie string.
        set req.http.Cookie = regsuball(req.http.Cookie, "^[; ]+|[; ]+$", "");

        if (req.http.Cookie == "") {
          # If there are no remaining cookies, remove the cookie header. If there
          # aren't any cookie headers, Varnish's default behavior will be to cache
          # the page.
          unset req.http.Cookie;
        } else {
          # If there are any cookies left (a session or NO_CACHE cookie), do not
          # cache the page. Pass it on to Apache directly.
          return (pass);
        }
      }

      # Handle compression correctly. Different browsers send different
      # "Accept-Encoding" headers, even though they mostly all support the same
      # compression mechanisms. By consolidating these compression headers into
      # a consistent format, we can reduce the size of the cache and get more hits.
      # @see: http://varnish.projects.linpro.no/wiki/FAQ/Compression
      if (req.http.Accept-Encoding) {
        if (req.http.Accept-Encoding ~ "gzip") {
          # If the browser supports it, we'll use gzip.
          set req.http.Accept-Encoding = "gzip";
        } else if (req.http.Accept-Encoding ~ "deflate") {
          # Next, try deflate if it is supported.
          set req.http.Accept-Encoding = "deflate";
        } else {
          # Unknown algorithm. Remove it and send unencoded.
          unset req.http.Accept-Encoding;
        }
      }

      #return (lookup);
    }

Note the commented-out return (lookup) at the end of the vcl_recv function. This is deliberate: when we terminate vcl_recv with one of the terminating statements (pass, pipe, lookup, error), its default logic is skipped entirely. We do not want this, since most of the default logic in vcl_recv is needed for a well-behaved Varnish server.
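The five cookie-stripping steps in the vcl_recv listing above can be tried outside Varnish. The sketch below replays the same regular expressions with sed on a sample Cookie header. filter_cookies is a made-up helper name, sed -E (extended regular expressions) is assumed to be available, and the cookie names in the examples are only illustrative.

```shell
# Replays the cookie whitelist from vcl_recv with sed; illustration only.

# filter_cookies COOKIE_HEADER_VALUE
# Prints the cookies that survive the whitelist (SESS* and NO_CACHE).
filter_cookies() {
    # Step 1: prepend a semi-colon, then let sed do steps 2 to 5.
    printf '%s\n' ";$1" | sed -E \
        -e 's/; +/;/g' \
        -e 's/;(SESS[a-z0-9]+|NO_CACHE)=/; \1=/g' \
        -e 's/;[^ ][^;]*//g' \
        -e 's/^[; ]+|[; ]+$//g'
}

filter_cookies 'has_js=1; SESSabc123=xyz; _ga=GA1.2'   # prints SESSabc123=xyz
filter_cookies '_ga=GA1.2; has_js=1'                   # prints an empty line
```

An empty result corresponds to the branch where Varnish unsets the Cookie header and the page becomes cacheable; anything left over corresponds to return (pass).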

Grace mode in Varnish speak means delivering otherwise expired objects when we need to. For instance, we might want Varnish to deliver pages from its cache when:

- Our backend is down/sick or misbehaving

- A different thread has already made a request to the backend that’s not yet finished.

In such cases, we can tell Varnish to enter ‘grace mode’ and serve so-called ‘stale’ content (expired but kept around in cache). To do this, we need to instruct Varnish a) how long it will keep expired content in cache and b) for how long it will serve stale content to visitors. The former will be defined in vcl_fetch (see below) while the latter has already been defined in vcl_recv above, with the following lines:

    sub vcl_recv {
      ...
      if (!req.backend.healthy) {
        set req.grace = 6h;
      } else {
        set req.grace = 15s;
      }
      ...
    }

With the above code we instructed Varnish to continue serving expired objects until they are 6 hours old (past their expiry time – TTL) when the backend is not healthy, otherwise to serve expired objects only for 15s beyond their TTL. You can set req.grace higher to ensure delivery of some, even old, content when the backend is down. Note that you need to have enabled Backend Polling (we did earlier in default.vcl’s default backend definition) – otherwise Varnish will always consider the backend healthy.

For grace to kick in we also need to define how long Varnish will allow items to be stale (expired but kept in cache). So in vcl_fetch we will put this code:

    set beresp.grace = 6h;

This commands Varnish to keep all objects 6 hours in cache past their expiry time (TTL). In our setup, the TTL of all resources in Varnish cache is controlled by the backend which shall set a “Cache-Control: max-age” header to 10 minutes (see below the explanation of TTL and how we setup the backend to set the correct header). Thus the above line of code says that all objects will be definitely purged from cache after 10 minutes plus 6 hours.

In the end, the above setup has the following consequences:

- When the backend is alive and healthy, each piece of content can be served from cache for TTL + 15s (the req.grace we set for a healthy backend), in our case for a total of 10 minutes and 15 seconds (Cache: HIT). After that, Varnish will refresh it from the backend (Cache: MISS, and all clients will have to wait for the fetch to finish).

- When the backend is down or misbehaving, all content will stay in cache for TTL + 6h, that is 370 minutes in our case. After that, we had better have our backend back online, or our pages will be inaccessible to visitors.

High-traffic website admins should select the grace duration carefully, because it may unexpectedly impact the user experience even when the backend is healthy. For instance, as the Varnish wiki states, if a page or object gets 1000 requests per second and Varnish needs 2 seconds to fetch and refresh it from the backend, then 2000 clients may wait for it.

Finally, note that there is no point in setting beresp.grace < req.grace. Because beresp.grace is the maximum grace period of each object, it should be set to the maximum value we ever want to set req.grace to.
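The interplay of TTL, req.grace and beresp.grace can be modelled in a few lines of shell. This is a deliberately simplified sketch and not how Varnish implements grace internally: grace_decision is a hypothetical helper, all values are in seconds, and it only answers whether an object of a given age could still be served.

```shell
# Simplified model of TTL plus grace; not Varnish code.

# grace_decision AGE TTL REQ_GRACE BERESP_GRACE   (all in seconds)
# Prints "fresh", "stale" (servable under grace) or "expired".
grace_decision() {
    gd_age=$1
    gd_ttl=$2
    # An object cannot be served for longer than it is kept around, so the
    # effective grace window is the smaller of req.grace and beresp.grace.
    if [ "$3" -lt "$4" ]; then
        gd_window=$3
    else
        gd_window=$4
    fi
    if [ "$gd_age" -le "$gd_ttl" ]; then
        echo fresh
    elif [ "$gd_age" -le $(( gd_ttl + gd_window )) ]; then
        echo stale
    else
        echo expired
    fi
}

# TTL of 10 minutes (600s), beresp.grace of 6h (21600s).
grace_decision 610 600 15 21600      # healthy backend, req.grace 15s: stale
grace_decision 620 600 15 21600      # healthy backend, past TTL + 15s: expired
grace_decision 620 600 21600 21600   # sick backend, req.grace 6h: stale
```

The minimum in the model is the reason why beresp.grace should never be smaller than the largest req.grace you intend to use.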

vcl_fetch – Fetching content from backend

The vcl_fetch function is executed after Varnish has successfully retrieved an object from the backend. It is used for tasks like altering the response headers, triggering ESI processing, trying alternate backend servers etc. By default, vcl_fetch avoids caching anything with a Set-Cookie header. The two most common actions to return from vcl_fetch are:

- hit_for_pass: Like the pass we used in vcl_recv, it tells Varnish not to cache the object, but it also creates a ‘hit_for_pass’ object in the cache with a TTL equal to the current value of beresp.ttl. A request returned with hit_for_pass is handled by vcl_deliver, but subsequent requests will go directly to vcl_pass based on the hit_for_pass object. This means that Varnish also caches the decision not to cache. In this way, multiple requests for the same non-cached object can be passed to the backend at the same time. It is useful when you don’t want clients to queue up waiting for the backend to respond to each one in turn, but rather want to hit your backend with all requests concurrently (see explanation here)

- deliver: Delivers the object to the client. If the object is not in cache it is also stored in cache.

In vcl_fetch we can use the request object, req, but also the backend response, beresp, which contains the HTTP headers from our web server.

All we do in the code below is to unset cookies for requested static files and set the grace period to 6h for expired content, as discussed earlier. We could change here the default TTL, but we do not (see why in the “Setting object TTL in Varnish” section below). We only change default TTL (beresp.ttl) to 1 hour for objects with 301 Moved Permanently response code.

    sub vcl_fetch {

      # Don't allow static files to set cookies.
      if (req.url ~ "(?i)\.(bmp|png|gif|jpeg|jpg|doc|pdf|txt|ico|swf|css|js|html|htm)(\?[a-z0-9]+)?$") {
        unset beresp.http.set-cookie;
        # Default in Drupal; uncomment to apply it for other CMSs as well.
        #set beresp.ttl = 2w;
      }

      if (beresp.status == 301) {
        set beresp.ttl = 1h;
        return(deliver);
      }

      # Allow items to be stale if the backend goes down. This means we keep all
      # objects around for 6 hours beyond their TTL (10 minutes in our setup).
      # So after 6h + 10 minutes each object is definitely removed from cache.
      set beresp.grace = 6h;

      # If you need to explicitly set the default TTL, do it below.
      # Otherwise, Varnish will set the default TTL by looking up
      # the Cache-Control headers returned by the backend.
      # set beresp.ttl = 6h;

      # If you have misbehaving sites (e.g. Drupal 6 or cookie-setters)
      # and you have forced Varnish to cache them in vcl_recv,
      # here you can instruct Varnish about their TTL, and
      # force Varnish to strip any cookies sent from the backend.
      #if (req.http.host ~ "(?i)^(www.)?linuxinsider.gr") {
      #  unset beresp.http.set-cookie;
      #  set beresp.http.Cache-Control = "public,max-age=602";
      #  set beresp.ttl = 120s;
      #}
    }

vcl_deliver (optional)

By now, we have a functional default.vcl, so go ahead and test it. To make testing easier, we will also redefine the vcl_deliver function, which is called before Varnish delivers a cached object to the client. There are two valid terminating keywords in vcl_deliver:

- deliver: Delivers the object to the client.

- restart: Restarts the transaction and increases the restart counter. If the number of restarts is higher than max_restarts, Varnish emits a guru meditation error.

In the code below, we check the object’s cache hits. If they are more than zero, it means the object will be served directly from cache. In this case, we set two http headers: X-Cache-Hits with the number of hits and X-Cache with a descriptive text “HIT!”. If object cache hits are zero, it means Varnish has just fetched it from the backend so we set X-Cache with the descriptive text “MISSED IT!”.

Lastly, in this routine we may hide some headers added by Varnish, to make sure people won’t see we are using Varnish, while editing Server and X-Powered-By to add our custom spicy signatures 🙂

    sub vcl_deliver {
        # Add cache hit data
        if (obj.hits > 0) {
            # If hit add hit count
            set resp.http.X-Cache = "HIT!";
            set resp.http.X-Cache-Hits = obj.hits;
        } else {
            set resp.http.X-Cache = "MISSED IT!";
        }

        # Hide headers added by Varnish. No need people know we're using Varnish.
        remove resp.http.Server;
        remove resp.http.X-Varnish;
        remove resp.http.Via;
        remove resp.http.X-Drupal-Cache;

        # Nobody needs to know we run PHP and have version xyz of it.
        remove resp.http.X-Powered-By;
        #remove resp.http.Age;
        unset resp.http.Link;

        set resp.http.Server = "apeiro.gr";
        set resp.http.X-Powered-By = "Curiosity killed the cat - read more at linuxinside.gr";
    }

To test the setup, see “Monitoring Varnish” section below.
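If you would rather script that check than read headers by eye, here is a minimal sketch in shell. It assumes the X-Cache header set in vcl_deliver above; the function name cache_status and the www.example.com URL are illustrative placeholders, not part of the setup described in this guide.

```shell
# Print the X-Cache verdict found in a block of HTTP response headers read
# from stdin. The header name is matched case-insensitively.
# (cache_status is a hypothetical helper name.)
cache_status() {
    awk -F': ' 'tolower($1) == "x-cache" { sub(/\r$/, "", $2); print $2; found = 1 }
                END { if (!found) print "no X-Cache header" }'
}

# Self-contained demonstration with canned headers:
printf 'HTTP/1.1 200 OK\r\nX-Cache: HIT!\r\nX-Cache-Hits: 3\r\n' | cache_status

# Against a live site (placeholder URL), the same check would look like:
#   curl -sI http://www.example.com/ | cache_status
```

Requesting the same page twice should normally move the answer from the miss text to the hit text, provided the page is cacheable at all.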

Starting Varnish

Just type in

    service varnish start

and cross your fingers. Or better see how to monitor Varnish as discussed further below…

But we haven’t finished yet! We still need to set up our backend CMS (e.g. Drupal) to work with Varnish.

Drupal 7.x configuration for Varnish

For Drupal 7 to play nice with Varnish, we need to inform it that we are using an external cache. This is done in your site’s /sites/default/settings.php file. Open it and add these lines:

    // Tell Drupal it's behind a proxy.
    $conf['reverse_proxy'] = TRUE;

    // Tell Drupal what addresses the proxy server(s) use.
    $conf['reverse_proxy_addresses'] = array('127.0.0.1');

    // Bypass Drupal bootstrap for anonymous users so that
    // Drupal sets max-age < 0.
    $conf['page_cache_invoke_hooks'] = FALSE;

    // Make sure that page cache is enabled.
    $conf['cache'] = 1;
    $conf['cache_lifetime'] = 0;

    // Set page cache max-age to 600 secs (10 min)
    // Change this to the highest time possible for your needs
    // Drupal will set a Cache-Control: "max-age=600" header
    $conf['page_cache_maximum_age'] = 600;

Note that by default Drupal's .htaccess includes the directive:

    ExpiresDefault A1209600

which tells caches such as Varnish to keep all static files for 2 weeks (1,209,600 seconds) after access.

Tip:

If your users are mostly anonymous and you have zero or only a few registered ones (like editors and admins), you might omit the Vary: Cookie header, which Drupal sends for anonymous page views, by adding this line to settings.php:

    $conf['omit_vary_cookie'] = true;

By default, the Vary: Cookie header tells an HTTP proxy that it may return a page directly from its local cache only if the user sends the same Cookie header as the user who originally requested the cached page. Omitting the header allows for better caching in HTTP proxies (including reverse proxies): even if clients send different cookies, they still get content served from the cache. But keep in mind that if you omit Vary: Cookie, authenticated users will also be served pages from the cache. To avoid that, your authenticated users need to access the site directly (i.e. not through an HTTP proxy, bypassing the reverse proxy if one is used).

After saving your settings.php, log in as admin to your site and navigate to admin/config/development/performance. There you should check that “Cache pages for anonymous users” is enabled and that “Expiration of cached pages” is equal to 10 minutes (or whatever you set earlier in $conf['page_cache_maximum_age']). If not, check your settings again before you continue with testing Varnish as discussed below.

What is TTL?

TTL, in geek speak, is an abbreviation for “Time-To-Live”. In the context of proxies, caches (like Varnish) and browsers, TTL is a numerical value which tells them how long an object can be kept in and served from the cache. To put it simply, TTL is the maximum age of an object in the cache. Thus, for Varnish caching to work as intended with our websites, every object served needs to have a TTL defined somehow, so that Varnish can decide whether to serve it from its cache or fetch a fresh version from the web server.

In Varnish, objects’ TTL is set by the beresp.ttl variable (in vcl_fetch). But before Varnish runs vcl_fetch, the value of beresp.ttl is set by default through one of the following:

- The s-maxage variable in the Cache-Control response header, if set by the backend

- The max-age variable in the Cache-Control response header, if set by the backend

- The Expires response header, if set by the backend

- Lastly, the default_ttl parameter, which has a default value of 120 seconds

Note: Keep in mind that, by default, Varnish only caches backend responses with specific status codes (200, 203, 300, 301, 302, 307, 404 and 410). To cache other status codes, you need to explicitly set beresp.ttl for them in vcl_fetch. Also note that well-behaved caches obey the directives in the Cache-Control header returned by the server (for more about the Cache-Control header read this).

So, when Varnish fetches an object from the backend server, it looks for the max-age parameter (or s-maxage) in Cache-Control response header and, if it is there, sets the TTL for that object to be equal to max-age.
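To make the lookup order concrete, here is a rough shell sketch of how a TTL could be picked from a Cache-Control value. It is a simplification and not Varnish's actual code: it ignores the Expires header and directives such as no-cache or private, and the function name ttl_from_cache_control is made up for illustration.

```shell
# Pick a TTL (in seconds) from a Cache-Control header value.
# $1 = Cache-Control value, $2 = fallback TTL (120, like Varnish's default_ttl).
# Simplified sketch: Expires and no-cache/private are deliberately not handled.
ttl_from_cache_control() {
    cache_control=$1
    default_ttl=${2:-120}
    # s-maxage is checked first, so it wins over max-age when both are present.
    for directive in s-maxage max-age; do
        ttl=$(printf '%s\n' "$cache_control" | tr 'A-Z' 'a-z' | tr ',' '\n' |
              sed -n "s/^[[:space:]]*${directive}=\([0-9][0-9]*\).*/\1/p" | head -n 1)
        if [ -n "$ttl" ]; then
            echo "$ttl"
            return 0
        fi
    done
    echo "$default_ttl"
}

ttl_from_cache_control "public, max-age=600"          # prints 600
ttl_from_cache_control "max-age=600, s-maxage=3600"   # prints 3600
ttl_from_cache_control ""                             # prints 120
```

The second call shows why s-maxage is handy: a shared cache like Varnish can keep the page for an hour while browsers still see max-age=600.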

Only if Varnish finds neither a Cache-Control nor an Expires header does it set beresp.ttl to the default_ttl value. You can check that value for yourself with the varnishadm command param.show default_ttl.

In our case, when we configured Drupal 7 to work with Varnish, we forced it to return a TTL (max-age = 600 seconds) for each page by adding this line in settings.php:

    $conf['page_cache_maximum_age'] = 600;

This means that once a page has been fetched from the backend, Varnish will set its TTL to 600 seconds and keep it cached for 10 minutes. During that time, Varnish will not request the same object from the backend, no matter how many clients ask for it. The cached object will be considered fresh and served from Varnish (X-Cache: HIT!), unless we explicitly purge it from the cache (see below how).

In fact, because we have configured Varnish grace mode (15s or 6h, when the server is up or down respectively), Varnish will keep objects in the cache even longer: 10 min + 15 seconds when the backend is up and 10 min + 6h when the backend is down.

As mentioned above, directives in Cache-Control headers may be respected by browser caches as well. When a browser downloads an object with a max-age=600 Cache-Control response header, it also caches it for 10 minutes. During that period, the browser will not request (or revalidate) the object from the web server. To change this, use the s-maxage parameter, which is like max-age but applies only to shared (public) caches.

Varnish respects Cache-Control headers and passes them to the client, but it also sets another header, called Age, with the number of seconds the object has been cached. Browsers use the Age header to determine how long to cache the object locally. So in our max-age-based scenario, a browser calculates the cache duration using the formula: cache duration = max-age - Age. This comes in handy in situations where we want Varnish to cache objects for a long time while disallowing client-side caching. In such a case, all we have to do is to set the Age header > max-age in vcl_fetch.
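As a quick worked example of that formula, the sketch below computes how long a browser may still keep an object, using max-age=600 and an Age of 528 (values that show up in the curl test later in this guide). The function name browser_cache_seconds is purely illustrative.

```shell
# Remaining browser cache lifetime in seconds: max-age minus Age,
# clamped at zero once the object is older than max-age.
browser_cache_seconds() {
    max_age=$1
    age=$2
    remaining=$((max_age - age))
    if [ "$remaining" -lt 0 ]; then
        remaining=0
    fi
    echo "$remaining"
}

browser_cache_seconds 600 528   # prints 72
browser_cache_seconds 600 700   # prints 0
```

The second call is the "Age greater than max-age" case described above: the browser has no freshness lifetime left and should not reuse its local copy without going back to the server.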

Nevertheless, modifying the Cache-Control headers returned by the backend is not the only way to use Varnish efficiently. Another way is to explicitly set beresp.ttl in vcl_fetch as we mentioned earlier:

    set beresp.ttl = 6h;

The above tells Varnish to keep all objects in its cache for 6 hours (plus the grace time).

Of course, we might want to set a higher TTL only for a particular section of our site, e.g. product pages:

    sub vcl_fetch {
        ...
        if (req.url ~ "^/products/.*$") {
            set beresp.ttl = 5d;
        }
        ...
    }

The above code will set the TTL to 5 days for all /products/* pages.

Or, you might want to enforce caching of static files for a longer period, say 2 weeks. Our vcl_fetch implementation contains this rule with the TTL line commented out, because two weeks is already the default in Drupal’s .htaccess. Enabling the line, as in the following code, applies the same policy to any other CMS we might host:

    sub vcl_fetch {
        if (req.url ~ "(?i)\.(bmp|png|gif|jpeg|jpg|doc|pdf|txt|ico|swf|css|js|html|htm)(\?[a-z0-9]+)?$") {
            unset beresp.http.set-cookie;
            set beresp.ttl = 2w;
        }
    }

varnishstat – Cache Hits/Misses and everything else

In everyday use of Varnish, you need to monitor your cache hit ratio, that is, the percentage of client requests that are actually served from the Varnish cache. This should be a high number, in order for Varnish to take the load off the backend (but keep in mind that “pass” in vcl_recv is not counted as a cache miss).

You also need to monitor crucial statistics such as how many requests/connections you are serving, how many of them end up at the backend and so on. For all of this, there is a very handy tool called varnishstat.

Execute it by typing:

    varnishstat

Example of varnishstat output

    0+03:37:56
    Hitrate ratio:       10      100     1000
    Hitrate avg:     0.7152   0.7034   0.5340

          681508         0.00        52.12 client_conn - Client connections accepted
          811320         0.00        62.05 client_req - Client requests received
          795464         0.00        60.83 cache_hit - Cache hits
             842         0.00         0.06 cache_hitpass - Cache hits for pass
            7444         0.00         0.57 cache_miss - Cache misses
            2416         0.00         0.18 backend_conn - Backend conn. success
           13442         0.00         1.03 backend_reuse - Backend conn. reuses
            1536         0.00         0.12 backend_toolate - Backend conn. was closed
           14980         0.00         1.15 backend_recycle - Backend conn. recycles
               4         0.00         0.00 backend_retry - Backend conn. retry
               2         0.00         0.00 fetch_head - Fetch head
           11115         0.00         0.85 fetch_length - Fetch with Length
            3703         0.00         0.28 fetch_chunked - Fetch chunked
             742         0.00         0.06 fetch_close - Fetch wanted close
             285         0.00         0.02 fetch_304 - Fetch no body (304)
             276          .            .   n_sess_mem - N struct sess_mem
               4          .            .   n_sess - N struct sess
            7440          .            .   n_object - N struct object
            7642          .            .   n_objectcore - N struct objectcore
            5803          .            .   n_objecthead - N struct objecthead
             204          .            .   n_waitinglist - N struct waitinglist
               2          .            .   n_vbc - N struct vbc
             400          .            .   n_wrk - N worker threads
             400         0.00         0.03 n_wrk_create - N worker threads created
               1          .            .   n_backend - N backends
           23216          .            .   n_lru_moved - N LRU moved objects
          777926         0.00        59.49 n_objwrite - Objects sent with write
          681652         0.00        52.13 s_sess - Total Sessions
          811320         0.00        62.05 s_req - Total Requests
            8411         0.00         0.64 s_pass - Total pass
           15847         0.00         1.21 s_fetch - Total fetch
       406640652         0.00     31098.25 s_hdrbytes - Total header bytes
     10392658523         0.00    794788.81 s_bodybytes - Total body bytes
          662850         0.00        50.69 sess_closed - Session Closed
             172         0.00         0.01 sess_pipeline - Session Pipeline
             167         0.00         0.01 sess_readahead - Session Read Ahead
          180148         0.00        13.78 sess_linger - Session Linger
           35250         0.00         2.70 sess_herd - Session herd
        37051326        26.96      2833.54 shm_records - SHM records
         2946964        26.96       225.37 shm_writes - SHM writes
           22146         0.00         1.69 shm_cont - SHM MTX contention
              12         0.00         0.00 shm_cycles - SHM cycles through buffer
               9         0.00         0.00 sms_nreq - SMS allocator requests
            3864          .            .   sms_balloc - SMS bytes allocated
            3864          .            .   sms_bfree - SMS bytes freed
           15852         0.00         1.21 backend_req - Backend requests made
               1         0.00         0.00 n_vcl - N vcl total

In the varnishstat output, the top line shows the Varnish uptime, followed by the Hitrate ratio and Hitrate avg lines. The three Hitrate ratio numbers (10, 100 and 1000) are the time spans over which each avg figure is calculated. So in this example, the hit rate averaged 0.7152 (about 72%) over the last 10 seconds and 0.5340 (about 53%) over the last 1000 seconds. Ideally, it should stay well above 0.5.
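The rolling averages at the top of the display can be complemented by a lifetime hit ratio computed from the raw counters, as hits / (hits + misses). The sketch below assumes the one-shot format printed by varnishstat -1 (counter name, value, rate, description); hit_ratio is a hypothetical helper name, and passes are left out of the calculation.

```shell
# Compute the overall cache hit ratio from "varnishstat -1" style lines
# read from stdin. Prints "n/a" when no hits or misses were seen.
hit_ratio() {
    awk '$1 == "cache_hit"  { hits = $2 }
         $1 == "cache_miss" { misses = $2 }
         END {
             total = hits + misses
             if (total == 0) { print "n/a"; exit }
             printf "%.4f\n", hits / total
         }'
}

# With the cache_hit and cache_miss counters from the sample output above:
printf '%s\n' 'cache_hit 795464 60.83 Cache hits' \
              'cache_miss 7444 0.57 Cache misses' | hit_ratio   # prints 0.9907

# On a live Varnish host the same function would be fed like this:
#   varnishstat -1 | hit_ratio
```

The lifetime figure is usually much higher than the rolling averages after a benchmark run, which is exactly what happened on this server.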

Below the hit rate, varnishstat displays a list of specific statistics in four columns. The first column is the raw value of the statistic, the second is its current change per second, the third is its per-second average since Varnish started, and the fourth is the statistic's name and description. Notice that some statistics, like n_sess or n_object, have no per-second figures: they are gauges that can decrease as well as increase, so their rate of change is not meaningful.

Using varnishstat, you should pay attention to the following statistics:

- client_conn & client_req: There should be a ratio close to 1:10 between connections and requests (in the example it is not, because I had been load-testing my server with ab). Investigate if it’s far below or far above that.

- client_drop: Should be zero. Counts clients Varnish had to drop due to resource shortage.

- backend_fail: Should be low. These are backend connection failures, usually seen by visitors as 503 errors.

- n_object: The total number of objects in cache.

- n_wrk: The total number of threads right now.

- n_wrk_queued: Counts requests queued because Varnish ran out of threads. If you see this rise, it means you need more threads…

- n_wrk_drop: Counts requests dropped because the queues were full. Should be zero, otherwise visitors were denied access to your sites!

- n_wrk_create: Number of threads created since start (should be close to n_wrk above).

- N worker threads not created (n_wrk_failed): Number of threads Varnish tried to create but failed. Should always be zero!

- N worker threads limited (n_wrk_max): Number of threads Varnish wanted to create but did not, either because of the maximum thread limit or because of thread_pool_add_delay.

- N overflowed work requests: Requests that had to be put on the request queue. Should be fairly static after startup.

- N dropped work requests: Requests Varnish never responded to because the request queue was full. Should ideally never happen.

- N lru nuked objects (n_lru_nuked): If it is constantly high, it means your cache is too small for your load and Varnish is constantly nuking objects to make space. In that case, you need to increase your cache size. If this is zero, there’s no point in making your cache larger.

- n_expired: Number of objects expired because they passed their TTL (or their TTL was set to zero) and their grace time too.
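Several of the counters above are only interesting when they stop being zero, so a tiny filter can do the watching for you. This is a sketch under assumptions: it expects varnishstat -1 style lines on stdin, the helper name nonzero_alarms is invented, and the three counter names checked are the Varnish 3 ones as I understand them, so adjust the list to what your varnishstat actually prints.

```shell
# Print every "should be zero" counter that is above zero.
# Reads "varnishstat -1" style lines (name, value, rate, description)
# from stdin and prints nothing when everything is fine.
nonzero_alarms() {
    awk '($1 == "client_drop" || $1 == "n_wrk_drop" || $1 == "n_wrk_failed") && $2 > 0 {
             print $1 " = " $2
         }'
}

# Demonstration with made-up values:
printf '%s\n' 'client_drop 0 0.00 Connection dropped, no sess/wrk' \
              'n_wrk_drop 3 0.00 N dropped work requests' | nonzero_alarms
# prints: n_wrk_drop = 3
```

Run from cron as varnishstat -1 | nonzero_alarms, any output at all would be a reason to look at your thread and memory settings.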

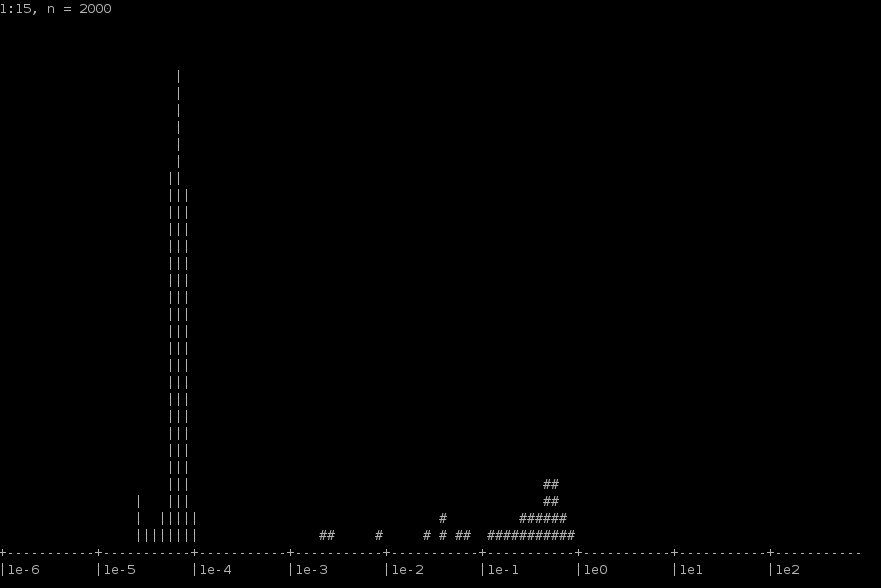

Varnishhist

You can graphically monitor hits/misses with another great tool, called varnishhist. It reads the varnishd shared memory logs and presents a continuously updated histogram showing the distribution of the last N requests by their processing time. The value of N and the vertical scale are displayed in the top left corner. The horizontal scale is logarithmic. Hits are marked with a pipe character (“|”), and misses are marked with a hash character (“#”).

Normally you want at least 50% of the graph points to be hits (|). Note also that the horizontal axis measures how long Varnish spent on each request. The further left on the X axis your requests are, the faster the overall performance is. Oh, and if you see requests at or above the 1e1 mark, it means that these requests needed 10 seconds or more to be processed. Not good 😛

Top URLs requested from backend

You can use varnishtop to identify what URLs are hitting the backend the most. Use the command

    varnishtop -i TxURL

which continuously prints only the “TxURL” entries, that is, the URLs being retrieved from your backend, for example:

    list length 73

         1.77 TxURL /files/js/js_f18fd007c9b12ce51f1eb5c975f1cfe5.jsmin.js
         1.77 TxURL /files/banners/firefoxos-banner.jpg
         1.75 TxURL /files/cc_80x15.png
         1.75 TxURL /files/drupal1.gif
         1.75 TxURL /files/button_apache.png
         1.75 TxURL /files/mysql_button.png
         1.75 TxURL /files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/desktop4.jpg
         1.75 TxURL /honeypot/Extras/mini_phpot_link.gif
         1.75 TxURL /files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/screenshot_from_2014-06-17_170230.png
         1.69 TxURL /files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/screenshot_from_2014-07-13_163211.png
         1.67 TxURL /files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/my_xubuntu.png
         1.65 TxURL /files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/elementary.png
         1.63 TxURL /files/sxolinux-banner-285x100px.png
         1.63 TxURL /sites/all/modules/advanced_forum/styles/silver_bells/images/ap_user_offline.png
         1.61 TxURL /sites/all/modules/advanced_forum/styles/silver_bells/images/topic_last_post.png
         1.61 TxURL /sites/all/modules/advanced_forum/styles/silver_bells/images/topic_top.png
         1.59 TxURL /files/banners/oshackers.org3b.png
         1.49 TxURL /?page=1229
         1.33 TxURL /user/register

The same can be done with this command:

    varnishlog -b | grep 'TxURL'

The above commands capture everything that hits your backend server, including URLs you have defined to be passed. If you’d rather have a verbose printout of cache misses only, use the varnishncsa tool like this:

    varnishncsa -F '%r %{Varnish:hitmiss}x' | grep miss

It will continuously print the requested URLs which missed the cache and were thus requested from the backend. If you like, you can redirect the output to a file, process it and present the results in a statistically meaningful way. All you need is the following two commands (you should let the first one run for a while):

    varnishncsa -F '%r %{Varnish:hitmiss}x' | grep miss > reqs.log
    # let it run for several minutes or even hours, then
    cat reqs.log | sort -k 1 | uniq -c | sort -k 1 -n -r | head -24

Here’s an example output from my server. Note that the first column shows how many times that request missed the cache:

    cat reqs.log | sort -k 1 | uniq -c | sort -k 1 -n -r | head -24
          3 GET http://www.linuxinside.gr/sites/all/modules/advanced_forum/styles/silver_bells/images/topic_top.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/sites/all/modules/advanced_forum/styles/silver_bells/images/topic_last_post.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/sites/all/modules/advanced_forum/styles/silver_bells/images/ap_user_offline.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/forum/9171/pos-epilegoyme-dianomi-linux-telika-epilegoyme-dianomi-i-grafiko-perivallon HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/files/sxolinux-banner-285x100px.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/screenshot_from_2014-07-13_163211.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/files/imagecache/scale-desktop-screenshot-220px/desktop-screenshots/my_xubuntu.png HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/files/drupal1.gif HTTP/1.1 miss
          3 GET http://www.linuxinside.gr/files/cc_80x15.png HTTP/1.1 mis
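If you run this kind of report often, the counting step can be wrapped in a small function. The sketch below is just the sort/uniq pipeline from above in reusable form; the name top_misses and the demo request lines are made up for illustration.

```shell
# Count identical lines in a log file and list the most frequent first.
# $1 = log file, $2 = how many entries to show (default 24).
top_misses() {
    sort "$1" | uniq -c | sort -k 1 -n -r | head -n "${2:-24}"
}

# Self-contained demonstration with invented request lines:
demo_log=$(mktemp)
printf '%s\n' 'GET /a.png HTTP/1.1 miss' \
              'GET /b.css HTTP/1.1 miss' \
              'GET /a.png HTTP/1.1 miss' > "$demo_log"
top_misses "$demo_log" 1    # shows /a.png with a count of 2
rm -f "$demo_log"
```

On a real server you would call it as top_misses reqs.log after letting varnishncsa collect misses for a while.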

URLs requested from clients (live)

The following command monitors the URLs clients request from Varnish in real time:

    varnishtop -i RxURL

Monitor status codes

Constantly list status codes Varnish receives from a backend with this command:

    varnishlog -O -i RxStatus

Use TxStatus instead, if you want to monitor status codes Varnish sends to clients:

    varnishlog -O -i TxStatus

The same can be done with varnishtop:

    varnishtop -i TxStatus

Monitor domains requested by your visitors

Likewise, you might want to monitor Varnish logs for the requested domains that hit your backend the most:

    varnishlog -O -i TxHeader | grep Host

or with varnishtop, sorted by their “popularity”:

    varnishtop -i TxHeader -C -I ^Host

This is a nice way to find possible setup problems in the backend CMS. If you see one domain dominating the list, it might be that its CMS is misconfigured, or not configured at all, to return max-age in its response headers, and hence Varnish does not cache it at all (yes, this has happened to yours truly :))!

By contrast, you might want to see all the domains requested by your visitors:

    varnishtop -i RxHeader -C -I ^Host

Object age

Another useful check is the age of your cached objects. Varnish adds an Age header to indicate how long the object has been kept inside Varnish. You can pick out the Age headers sent to clients with varnishtop like this:

    varnishtop -i TxHeader -I ^Age

Monitoring specific URLs

When you want to monitor all requests for a specific URL, use varnishlog with the -m parameter, which takes a regex and lists only the transactions that match it. You can have multiple -m options, as in the following example. This command lists the client requests for the front page of dimitris.apeiro.gr (change this to your site).

    varnishlog -c -m "RxURL:^/$" -m 'Hash:^dimitris.apeiro.gr$'

The following command monitors client requests for a specific page of another site (change it to your needs):

    varnishlog -c -m "RxURL:^/106/oneirokritis-gata$"

If you need to monitor requests hitting your origin server (the backend), use TxURL in the regex like this:

    varnishlog -c -m "TxURL:^/106/oneirokritis-gata$" -m 'Hash:^www.oneirokriths123.com$'

Monitoring requests from a single host

You might want to monitor all requests coming from a single client/host. This is easily done with varnishlog. The following command shows all communication between that client and Varnish (replace IP with the client's IP address):

    varnishlog -c -m ReqStart:IP

The next command monitors the communication between Varnish and the backend server for requests made by the same client. Try reloading the resource in the browser. If it is cached correctly, you shouldn’t see any communication between Varnish and any backend server. If you do see something printed there, inspect the HTTP caching headers and verify they are correct.

    varnishlog -b -m TxHeader:IP

Monitoring user-agents with Varnish

    varnishtop -i RxHeader -C -I ^User-Agent

Monitoring user IPs with Varnish

Varnishtop can be used to display an updated list of most frequent visitor IPs:

    varnishtop -c -f -i ReqStart

While the above command will only process log entries written after it starts, you can use the -d option to force varnishtop to process old log entries on startup:

    varnishtop -c -d -f -i ReqStart

Here’s a sample output:

    # varnishtop -c -1 -f -i ReqStart
       330.00 ReqStart 46.177.81.147
       329.00 ReqStart 94.70.28.149
       312.00 ReqStart 62.1.192.110
       306.00 ReqStart 46.198.183.242
       306.00 ReqStart 85.74.238.50
       296.00 ReqStart 78.87.112.66
       295.00 ReqStart 94.64.147.235
    ...

How to check backend sanity for yourself

To test your backend health polling scheme, you can use varnishlog or varnishadm. With the former, all you need is the command:

    varnishlog -i Backend_health -O

If your backend is alive, you’ll see something like this:

    # varnishlog -i Backend_health -O
        0 Backend_health - default Still healthy 4--X-RH 10 8 10 0.001564 0.001548 HTTP/1.1 200 OK
        0 Backend_health - default Still healthy 4--X-RH 10 8 10 0.001548 0.001548 HTTP/1.1 200 OK
        0 Backend_health - default Still healthy 4--X-RH 10 8 10 0.001325 0.001492 HTTP/1.1 200 OK
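Log lines like these are easy to check from a script as well. The sketch below prints the name of every backend whose most recent Backend_health record ends in "sick"; it assumes the field layout shown in the sample above and that sick backends are reported with phrases such as "Went sick" or "Still sick". The function name sick_backends and the backend name api are illustrative only.

```shell
# Read Backend_health log lines from stdin and print the backends whose
# latest reported state is "sick". Field 4 is the backend name and
# field 6 the state word, as in the sample output above.
sick_backends() {
    awk '$2 == "Backend_health" { state[$4] = $6 }
         END { for (name in state) if (state[name] == "sick") print name }'
}

# Demonstration with made-up log lines:
printf '%s\n' \
    '0 Backend_health - default Still healthy 4--X-RH 10 8 10 0.001564 0.001548 HTTP/1.1 200 OK' \
    '0 Backend_health - api Went sick 4--X--- 7 8 10 0.000000 0.001548' | sick_backends
# prints: api
```

Fed with the already-logged records (varnishlog has a -d option for that), such a check could run from cron and alert you before your visitors notice.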

If you prefer varnishadm, run it and type in the command debug.health:

    varnish> debug.health
    200
    Backend default is Healthy
    Current states  good: 10 threshold:  8 window: 10
    Average responsetime of good probes: 0.001556
    Oldest                                                    Newest
    ================================================================
    4444444444444444444444444444444444444444444444444444444444444444 Good IPv4
    XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX Good Xmit
    RRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRRR Good Recv
    HHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHHH Happy

Now do the following to check that everything is OK. First, warm up Varnish's cache by visiting some pages.

Then stop Apache (service apache2 stop). Inside the varnishadm console, the debug.health command should now show something like this:

    varnish> debug.health
    200
    Backend default is Sick
    Current states  good:  0 threshold:  8 window: 10
    Average responsetime of good probes: 0.001497
    Oldest                                                    Newest
    ================================================================
    ---------------------------------------------------------------- Happy

Testing Varnish performance and monitoring your headers

To test how Varnish is performing, you can use the powerful ab tool (install the apache2-utils package in Debian).

    ab -n 10000 -c 10 http://www.yourdomain.com/

Example:

    root@eleni ~ # ab -n 1000 -c 10 http://www.oneirokriths123.com/
    This is ApacheBench, Version 2.3 <$Revision: 655654 $>
    Copyright 1996 Adam Twiss, Zeus Technology Ltd, http://www.zeustech.net/
    Licensed to The Apache Software Foundation, http://www.apache.org/

    Benchmarking www.oneirokriths123.com (be patient)
    Completed 100 requests
    Completed 200 requests
    ...
    Completed 1000 requests
    Finished 1000 requests


    Server Software:        apeiro
    Server Hostname:        www.oneirokriths123.com
    Server Port:            80

    Document Path:          /
    Document Length:        62092 bytes

    Concurrency Level:      10
    Time taken for tests:   0.577 seconds
    Complete requests:      1000
    Failed requests:        0
    Write errors:           0
    Total transferred:      62526404 bytes
    HTML transferred:       62092000 bytes
    Requests per second:    1731.61 [#/sec] (mean)
    Time per request:       5.775 [ms] (mean)
    Time per request:       0.577 [ms] (mean, across all concurrent requests)
    Transfer rate:          105733.96 [Kbytes/sec] received

    Connection Times (ms)
                  min  mean[+/-sd] median   max
    Connect:        0    1   0.3      1       3
    Processing:     2    5   6.5      4     207
    Waiting:        1    1   0.3      1       3
    Total:          3    6   6.5      5     207

    Percentage of the requests served within a certain time (ms)
      50%      5
      66%      6
      75%      6
      80%      6
      90%      7
      95%      7
      98%      9
      99%     10
     100%    207 (longest request)

Now, 1731 requests per second is not bad… By default, ab is not requesting gzip compression. We can enable it, using the -H parameter as below, to match more closely a real browser request. In that case, Varnish performs even better:

    root@eleni ~ # ab -n 1000 -c 10 -H 'Accept-Encoding: gzip,deflate' http://www.oneirokriths123.com/

    Benchmarking www.oneirokriths123.com (be patient)
    Completed 100 requests
    ...
    Completed 1000 requests
    Finished 1000 requests


    Server Software:        apeiro
    Server Hostname:        www.oneirokriths123.com
    Server Port:            80

    Document Path:          /
    Document Length:        13326 bytes

    Concurrency Level:      10
    Time taken for tests:   0.187 seconds
    Complete requests:      1000
    Failed requests:        0
    Write errors:           0
    Total transferred:      13830000 bytes
    HTML transferred:       13326000 bytes
    Requests per second:    5338.00 [#/sec] (mean)
    Time per request:       1.873 [ms] (mean)
    Time per request:       0.187 [ms] (mean, across all concurrent requests)
    Transfer rate:          72094.31 [Kbytes/sec] received

    Connection Times (ms)
                  min  mean[+/-sd] median   max
    Connect:        0    0   0.2      0       1
    Processing:     1    1   0.3      1       2
    Waiting:        0    1   0.2      1       2
    Total:          1    2   0.2      2       3

    Percentage of the requests served within a certain time (ms)
      50%      2
      66%      2
      75%      2
      80%      2
      90%      2
      95%      2
      98%      2
      99%      2
     100%      3 (longest request)
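To compare two ab runs without squinting at the reports, you can pull out the "Requests per second" figure and divide. This is a small sketch with invented helper names (requests_per_second and speedup); it assumes ab's usual report layout as shown above.

```shell
# Extract the requests-per-second figure from an ab report read from stdin.
requests_per_second() {
    awk '/^Requests per second:/ { print $4 }'
}

# Print how many times faster the second figure is than the first.
speedup() {
    awk -v before="$1" -v after="$2" 'BEGIN { printf "%.2f\n", after / before }'
}

# With the two results from the runs above:
speedup 1731.61 5338.00   # prints 3.08

# Against saved reports it could be used like this (file names are placeholders):
#   speedup "$(requests_per_second < plain.txt)" "$(requests_per_second < gzip.txt)"
```

So in this test, asking for gzip made Varnish roughly three times faster per request, mostly because each response is much smaller (13326 bytes instead of 62092).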

But how well does Varnish perform when the request also carries cookies? You can check it using the curl tool. In the example below, the --cookie parameter sets our cookie, while the -I parameter tells curl to print out only the response headers.

    root@eleni ~ # curl --cookie "mycookie=jim" -I http://www.supersyntages.gr

    HTTP/1.1 200 OK
    Server: cloudflare-nginx
    Date: Wed, 09 Jul 2014 11:49:41 GMT
    Content-Type: text/html; charset=utf-8
    Connection: keep-alive
    Set-Cookie: __cfduid=d1e9666a1b044da53d9750a78054acfd81404906580975; expires=Mon, 23-Dec-2019 23:50:00 GMT; path=/; domain=.supersyntages.gr; HttpOnly
    Content-Language: el
    Cache-Control: public, max-age=600
    Last-Modified: Wed, 09 Jul 2014 11:22:44 +0000
    Expires: Sun, 19 Nov 1978 05:00:00 GMT
    Vary: Cookie,Accept-Encoding
    Age: 0
    X-Cache: MISS it!
    X-Powered-By: Curiosity killed the cat - see linuxinside.gr
    CF-RAY: 14744cb313bf0a2a-VIE

In this example, curl has printed all relevant information about the page we requested, along with the headers we allowed in the vcl_deliver function. The page tells the client that it can cache the document for max-age=600 seconds, or 10 minutes. The most important header is X-Cache, where Varnish informs us whether the request was served from the cache or was transferred to our backend. As it turns out, this time we missed the cache, but in subsequent calls the page is served from the cache:

    root@eleni ~ # curl --cookie "anothercookie=example" -I http://www.supersyntages.gr/

    HTTP/1.1 200 OK
    Server: cloudflare-nginx
    Date: Wed, 09 Jul 2014 11:58:29 GMT
    Content-Type: text/html; charset=utf-8
    Connection: keep-alive
    Set-Cookie: __cfduid=dc2bb6e32495d1640c5fa9ae6b8ce345a1404907109270; expires=Mon, 23-Dec-2019 23:50:00 GMT; path=/; domain=.supersyntages.gr; HttpOnly
    Content-Language: el
    Cache-Control: public, max-age=600
    Last-Modified: Wed, 09 Jul 2014 11:22:44 +0000
    Expires: Sun, 19 Nov 1978 05:00:00 GMT
    Vary: Cookie,Accept-Encoding
    Age: 528
    X-Cache: HIT!
    X-Cache-Hits: 1
    X-Powered-By: Curiosity killed the cat - see linuxinside.gr
    CF-RAY: 14745998f2580a2a-VIE

Purging (ban) content from Varnish cache

If you want to purge something from cache, use varnishadm’s ban command. For instance, the command below will purge from cache all objects belonging to a site:

    varnishadm "ban req.http.host ~ www.oneirokriths13.com"

Usually you won’t need to purge an entire website, and you really should not do so during high traffic, unless your backend server has got balls of steel. Varnish ban lets us purge by cherry-picking specific items. Since ban accepts regular expressions, we can create more specific bans, e.g. purge only the .css files of a given website:

    varnishadm "ban req.http.host ~ www.how-to-seo.gr && req.url ~ .css"

Varnish and WordPress

If you can’t log in to your WordPress site after installing Varnish, this is probably a cookie problem. If you google it, you’ll find many threads discussing the problem, with various solutions. The most usual solution offered is to add some lines to vcl_recv. The solution I came up with was to stop unsetting cookies for wp-admin and wp-login URLs and pass them directly to the backend (see lines 47,48 and 106 in vcl_recv code). You might also want to check out the Varnish plugin for WordPress.

How to exclude a domain or virtual host from Varnish Cache

If you wish to exclude from Varnish caching a single domain hosted on the origin server, simply add an if test in vcl_recv function. It should look like this (change the domain to your own of course). The set req.backend = default line is optional.

    if (req.http.host == "www.linuxinsider.gr") {
        set req.backend = default;
        return (pass);
    }

How to make Varnish Cache work nicely with Google’s pagespeed apache module

Google’s mod_pagespeed is a great webserver module which speeds up your sites while reduces page load times. It automagically applies some of the best web performance practices (such as compression, image/css/js minification and optimization, etags utilization etc) to all your pages and assets (js, css, images, etc) without modifying anything on your CMS/backend. It includes more than 40 configurable optimization filters, although you can see a great deal of performance improvement just by enabling it.

The problem is that mod_pagespeed does not work well with Varnish out of the box, because it optimizes web resources (e.g. images) on the fly but not right away. So in these cases Varnish will cache and serve a partially optimized resource. We need a way for mod_pagespeed and Varnish to talk to each other so that Varnish caches only fully optimized pages. This is done through a pagespeed.conf option called ModPagespeedBlockingRewriteKey, which forces mod_pagespeed to deliver fully optimized resources whenever a request carries the matching key.

In the mod_pagespeed configuration file, enable that option with a custom key of your own, e.g. “givemyfullyoptimizedpagesplease”. Add a line like this near the end of the file:

ModPagespeedBlockingRewriteKey givemyfullyoptimizedpagesplease

In Varnish’s vcl_recv, set a request header “X-PSA-Blocking-Rewrite: custom-key”, using the same key of course:

set req.http.X-PSA-Blocking-Rewrite = "givemyfullyoptimizedpagesplease";

That request header will be sent to mod_pagespeed, which in turn will know that it should always deliver fully optimized resources (see explanation here). The only downside is that with a cold server cache it may cost the first visitor a few seconds after the Varnish cache has expired. But you can fix this with a wget crawler (such as ‘wget --header="X-PSA-Blocking-Rewrite: YOUR_KEY" YOURSITE’) that periodically refreshes your cache.

This is really a workaround. If you mean to engage seriously with mod_pagespeed, follow the guidelines (e.g. sending a purge request to Varnish) described in the module’s Downstream Caching page. The configuration described there also addresses the issue of multiple user agents fragmenting the Varnish cache.

Upgrading to Varnish 4

TODO

Read more about Varnish Cache

For beginners:

Varnish configuration templates

VCL Basics

VCL Reference

Varnish VCL Examples

Varnish cache invalidation and purging

For advanced usage:

Varnish Best Practices

Varnish Performance Tips

High-end Varnish tuning

Upgrading to Varnish 4

Cache popular content longer

Longer caching with Varnish

rfc2616-sec14: Modifications of the Basic Expiration Mechanism (Cache-Control)

To use with Drupal:

Lullabot’s excellent post about Varnish and Drupal

Configure Varnish with Drupal captcha module

Pressflow: Modules that break Varnish cache

Drupal’s Minimum Cache Lifetime explained

Varnish 3.0 Setup in HA for Drupal 7 with Redhat (Part 2)

To use with WordPress:

WordPress & Varnish

http purge module for WordPress

WordPress performance with Varnish

Useful webspeed testers

Website speed test

GTmetrix

Example default.vcl

varnish-for-drupal vcl

Lullabot’s default.vcl

Nick Taylor’s default.vcl

annoyingone

Thanks for the detailed howto. Jfyi, TTL + 6h would be 370 minutes not 610 minutes

Dimitris Kalamaras

And thank you for the patch 🙂

Ayberk Kimsesiz

Is it also possible to add a setting to this file for XenForo forum (domain.com/forum)?

Thanks.

/* SET THE HOST AND PORT OF WORDPRESS
* *********************************************************/
vcl 4.0;
import std;

backend default {
    .host = "******";
    .port = "8080";
    .connect_timeout = 600s;
    .first_byte_timeout = 600s;
    .between_bytes_timeout = 600s;
    .max_connections = 800;
}

# SET THE ALLOWED IP OF PURGE REQUESTS
# ##########################################################
acl purge {
    "localhost";
    "127.0.0.1";
}

# THE RECV FUNCTION
# ##########################################################
sub vcl_recv {
    # set realIP by trimming CloudFlare IP which will be used for various checks
    set req.http.X-Actual-IP = regsub(req.http.X-Forwarded-For, "[, ].*$", "");

    # FORWARD THE IP OF THE REQUEST
    if (req.restarts == 0) {
        if (req.http.x-forwarded-for) {
            set req.http.X-Forwarded-For =
                req.http.X-Forwarded-For + ", " + client.ip;
        } else {
            set req.http.X-Forwarded-For = client.ip;
        }
    }

    # Purge request check sections for hash_always_miss, purge and ban
    # BLOCK IF THE IP IS NOT IN THE PURGE ACL
    # ##########################################################

    # Enable smart refreshing using hash_always_miss
    if (req.http.Cache-Control ~ "no-cache") {
        if (client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            set req.hash_always_miss = true;
        }
    }

    if (req.method == "PURGE") {
        if (!client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            return (synth(405, "Not allowed."));
        }
        return (purge);
    }

    if (req.method == "BAN") {
        # Same ACL check as above:
        if (!client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            return (synth(403, "Not allowed."));
        }
        ban("req.http.host == " + req.http.host +
            " && req.url == " + req.url);
        # Throw a synthetic page so the
        # request won't go to the backend.
        return (synth(200, "Ban added"));
    }

    # Unset cloudflare cookies
    # Remove has_js and CloudFlare/Google Analytics __* cookies.
    set req.http.Cookie = regsuball(req.http.Cookie, "(^|;\s*)(_[_a-z]+|has_js)=[^;]*", "");
    # Remove a ";" prefix, if present.
    set req.http.Cookie = regsub(req.http.Cookie, "^;\s*", "");

    # For Testing: If you want to test with Varnish passing (not caching) uncomment
    # return( pass );

    # DO NOT CACHE RSS FEED
    if (req.url ~ "/feed(/)?") {
        return ( pass );
    }

    ## Do not cache search results, comment these 3 lines if you do want to cache them
    if (req.url ~ "/\?s\=") {
        return ( pass );
    }

    # CLEAN UP THE ENCODING HEADER.
    # SET TO GZIP, DEFLATE, OR REMOVE ENTIRELY. WITH VARY ACCEPT-ENCODING
    # VARNISH WILL CREATE SEPARATE CACHES FOR EACH
    # DO NOT ACCEPT-ENCODING IMAGES, ZIPPED FILES, AUDIO, ETC.
    # ##########################################################
    if (req.http.Accept-Encoding) {
        if (req.url ~ "\.(jpg|png|gif|gz|tgz|bz2|tbz|mp3|ogg)$") {
            # No point in compressing these
            unset req.http.Accept-Encoding;
        } elsif (req.http.Accept-Encoding ~ "gzip") {
            set req.http.Accept-Encoding = "gzip";
        } elsif (req.http.Accept-Encoding ~ "deflate") {
            set req.http.Accept-Encoding = "deflate";
        } else {
            # unknown algorithm
            unset req.http.Accept-Encoding;
        }
    }

    # PIPE ALL NON-STANDARD REQUESTS
    # ##########################################################
    if (req.method != "GET" &&
        req.method != "HEAD" &&
        req.method != "PUT" &&
        req.method != "POST" &&
        req.method != "TRACE" &&
        req.method != "OPTIONS" &&
        req.method != "DELETE") {
        return (pipe);
    }

    # ONLY CACHE GET AND HEAD REQUESTS
    # ##########################################################
    if (req.method != "GET" && req.method != "HEAD") {
        return (pass);
    }

    # OPTIONAL: DO NOT CACHE LOGGED IN USERS (THIS OCCURS IN FETCH TOO, EITHER
    # COMMENT OR UNCOMMENT BOTH
    # ##########################################################
    if ( req.http.cookie ~ "wordpress_logged_in" ) {
        return( pass );
    }

    # This is for phpmyadmin
    # (checked before the cookies are unset and before return (hash),
    # otherwise these tests are never reached)
    if (req.http.Host == "ki1.org") {
        return (pass);
    }
    if (req.http.Host == "mysql.ki1.org") {
        return (pass);
    }

    # IF THE REQUEST IS NOT FOR A PREVIEW, WP-ADMIN OR WP-LOGIN
    # THEN UNSET THE COOKIES
    # ##########################################################
    if (!(req.url ~ "wp-(login|admin)")
        && !(req.url ~ "&preview=true" )
    ){
        unset req.http.cookie;
    }

    # IF BASIC AUTH IS ON THEN DO NOT CACHE
    # ##########################################################
    if (req.http.Authorization || req.http.Cookie) {
        return (pass);
    }

    # IF YOU GET HERE THEN THIS REQUEST SHOULD BE CACHED
    # ##########################################################
    return (hash);
}

# HIT FUNCTION
# ##########################################################
sub vcl_hit {
    # IF THIS IS A PURGE REQUEST THEN DO THE PURGE
    # ##########################################################
    if (req.method == "PURGE") {
        #
        # This is now handled in vcl_recv.
        #
        # purge;
        return (synth(200, "Purged."));
    }
    return (deliver);
}

# MISS FUNCTION
# ##########################################################
sub vcl_miss {
    if (req.method == "PURGE") {
        #
        # This is now handled in vcl_recv.
        #
        # purge;
        return (synth(200, "Purged."));
    }
    return (fetch);
}

# FETCH FUNCTION
# ##########################################################
sub vcl_backend_response {
    # I SET THE VARY TO ACCEPT-ENCODING, THIS OVERRIDES W3TC
    # TENDENCY TO SET VARY USER-AGENT. YOU MAY OR MAY NOT WANT
    # TO DO THIS
    # ##########################################################
    set beresp.http.Vary = "Accept-Encoding";

    # IF NOT WP-ADMIN THEN UNSET COOKIES AND SET THE AMOUNT OF
    # TIME THIS PAGE WILL STAY CACHED (TTL)
    # ##########################################################
    if (!(bereq.url ~ "wp-(login|admin)") && !bereq.http.cookie ~ "wordpress_logged_in" ) {
        unset beresp.http.set-cookie;
        set beresp.ttl = 52w;
        # set beresp.grace =1w;
    }
}

# DELIVER FUNCTION
# Assumption: the pasted text is garbled at this point (it reads
# "if (beresp.ttl 0) {"), so whatever stood between the two functions is lost.
# resp.* can only be set in vcl_deliver, so the X-Cache header is set here,
# keyed on obj.hits.
# ##########################################################
sub vcl_deliver {
    if (obj.hits > 0) {
        set resp.http.X-Cache = "HIT";
    # IF THIS IS A MISS RETURN THAT IN THE HEADER
    # ##########################################################
    } else {
        set resp.http.X-Cache = "MISS";
    }
}